Twitter is the new Facebook, and Substack is the new Twitter: Life after the ‘peak algorithm’

It's not the algorithm. What's ruining platforms is irrelevance.

‘Be without fear in the face of your enemies. Be brave and upright that God may love thee. Speak the truth always, even if it leads to your death. Safeguard the helpless and do no wrong. That is your oath’.

— Ridley Scott, The Kingdom of Heaven

I have a theory that I suspect will be controversial — one that may even cause offence. But I find myself increasingly convinced that Meredith Whittaker is entirely right when she argues that failing to tell the truth, and failing to uphold it consistently, makes us stupid. Because it forces us to operate intellectually inside a charade — one that ends up atrophying our reasoning, as we build castles of pretended certainties that are nothing more than dogma.

Of all the dogmas of our culture, perhaps the most powerful is that of absolute evil. When something frightens us, we rush to invent a monster in order to exorcise it — so that the terror is no longer inside us, but inhabits an external body we can blame, fear, and hate.

And so, while we lived at the mercy of the natural world, the Yeti, the Loch Ness Monster, Bigfoot, and the Chupacabra were among the many variations of a single universal fear of nature. Later, vampires were the invention of a society traumatised by plague and tuberculosis. And when the world began to fear progress, it conjured up the creature of Doctor Frankenstein. Since it is usually the unknown that frightens us most, medieval mapmakers would typically inscribe the unexplored regions of their charts with a warning: Hic sunt dracones. There are always dragons in what we cannot yet explain.

We, the human beings of the twenty-first century, living in terror of the phenomenon of digital interconnection, have also invented the demon of our time. A being without a body, without a face, without a soul, which we have called “the algorithm.”

And since, at bottom, what frightens us most is not monsters but other human beings, we have convinced ourselves that this being is a weapon of mass destruction in the hands of creatures malevolent enough to rival Dracula: the tech bros. We have persuaded ourselves that, in their hands, social media will be the ruin of humanity, the hell in which children will burn and women will be violated.

But monsters, you will agree with me, do not exist. On the contrary: every change that has ever frightened us has always been driven by a social phenomenon — by a plurality of interests, actors, and circumstances too complex to reduce to a monster.

And so today I am wading into dangerous territory, to argue against the widely held dogma that everything wrong with the world is the fault of social media. More than that: I maintain that social media, as we have known it, is dead. Or at least on its way out. In all likelihood, the children of a few years from now will have no interest whatsoever in using it.

And I do not believe it has been killed by the tech bros — but by the same agent that finished off Facebook: ordinary people. Or, put another way: it is not the algorithm. What is ruining the platforms is an invasion of users who have nothing to say.

Let me explain.

The first social networks — Twitter, Facebook, Reddit, Tumblr, LinkedIn — were built on an expectation of horizontality. Facebook promised to connect everyone with everyone else. Twitter occupied an adjacent space, but on the same principle. The format — those minimal posts that anyone could create with no effort — served that function: ordinary people talking about their lives on an equal footing with other ordinary people.

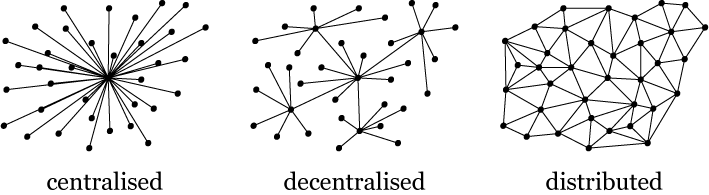

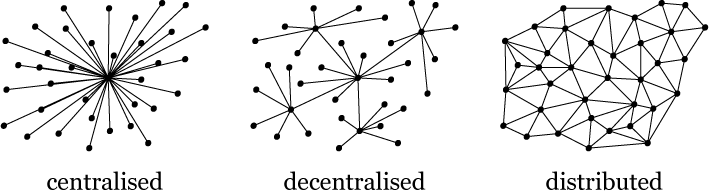

This was, to a large extent, an inheritance from internet culture itself. Software, in its first iteration, tends to replicate the hardware it runs on, and in the early days the internet was conceived as a kind of road network in which many nodes, the computers, connected with one another on equal terms. As a consequence, those first social networks of the 2000s replicated that architecture and conceived of themselves as “distributed.”

But that was a prehistoric vision of the internet — however firmly it remains lodged in some people’s heads. It makes far more sense to think of the internet not as a territory, but as a channel through which a torrent of information flows — created by billions of springs, each one of us. If, instead of thinking about the internet from the hardware perspective — the connected machines — we think about it from the human experience, it becomes clear that the internet is not a road map: it is a river.

The “feed” of each social network is a tributary of that river, but our WhatsApp chats are another, and websites — particularly those of news outlets — are a third, to take three familiar examples. The internet is us; it is humanity. And the best way to describe it is not a static map, but a process, a story — a becoming.

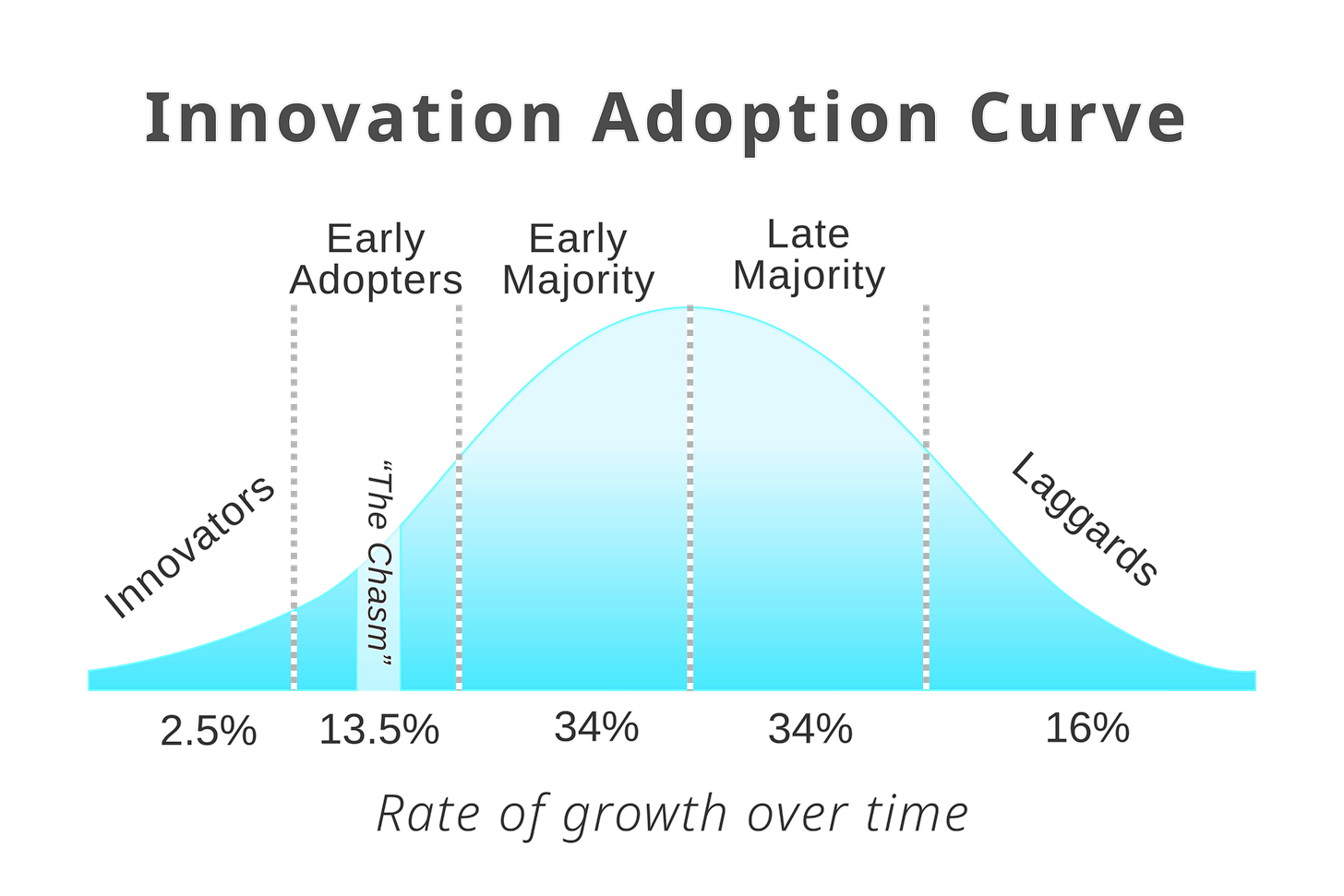

While the river was small, in the early 2000s, it was more or less comprehensible to the human brain. Facebook, at its best, did not contain everyone — only a small slice of society. A group of early adopters willing to invest considerable time in creating content and to modify their behaviour to adapt to the culture of the platform. It was a network of peers.

But over time, Facebook went mainstream and filled up with late adopters who were no longer willing to do any of that. The river became an immense and incomprehensible torrent.

Twitter, which was a far more niche platform, held on for a few more years — but ultimately suffered the same fate. In its early days it was the closest thing to a pub or a living room: a place of trust, an improved version of the many forums that had dominated the internet. But as it filled with late adopters unwilling to adapt to the local culture, it became a cacophony.

Reddit survived — more or less — because it never became entirely mainstream. To this day it remains a relatively closed community of peers, held together by the effort participation requires.

The Algorithm Against Meaninglessness

Faced with that flood of late adopters, Twitter and Facebook encountered a problem. The feed was filling up with updates that held no interest, no importance, and no relevance for those receiving them. The human brain needs to invest a certain amount of time reading each update, and if it is forced to scroll through several that mean nothing to it, it grows bored and leaves.

And so those platforms modified their user experience to prevent that avalanche of irrelevant content from overwhelming them. They stopped displaying posts in the chronological order in which they were published, and began promoting their own version of what mattered. The algorithmic feed was born.

Social media algorithms of this kind are described as “predictive.” In essence, they take an immense amount of data, including a user’s prior actions, and attempt to infer what that user will want to see next.

“Engineers assign a point value to each type of interaction users can perform on a post — liking, commenting, sharing, and so on.

For each post that could potentially be shown to you, these point values are multiplied by the probability that the algorithm believes you will perform that interaction. These multiplied pairs of numbers are added together, and the total is the post’s personalised score for you.

There are a few additional details, but broadly speaking, your feed is created by ranking posts according to these scores, from highest to lowest.”

(This link provides a detailed explanation of how Facebook’s algorithm works, and this one explains how Twitter’s algorithm works.)
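The scoring described in the quotation above can be sketched in a few lines of Python. This is only an illustration of the weighted-sum idea, not any platform's actual code: the point values in `INTERACTION_WEIGHTS`, the `Post` class, the function names, and the example probabilities are all assumptions made up for the example.

```python
from dataclasses import dataclass
from typing import Dict, List

# Hypothetical point values per interaction type. The real values used by
# any platform are not given in the text; these are invented for the sketch.
INTERACTION_WEIGHTS: Dict[str, float] = {"like": 1.0, "comment": 4.0, "share": 8.0}


@dataclass
class Post:
    post_id: str
    # Predicted probability that this particular user performs each
    # interaction on this post (assumed to come from some upstream model).
    predicted_probabilities: Dict[str, float]


def personalised_score(post: Post, weights: Dict[str, float]) -> float:
    """Sum of (point value x predicted probability) over interaction types."""
    return sum(
        weight * post.predicted_probabilities.get(interaction, 0.0)
        for interaction, weight in weights.items()
    )


def rank_feed(posts: List[Post], weights: Dict[str, float]) -> List[Post]:
    """Order candidate posts from highest to lowest personalised score."""
    return sorted(posts, key=lambda p: personalised_score(p, weights), reverse=True)


# Three made-up candidate posts for a single user.
candidates = [
    Post("holiday-photos", {"like": 0.50, "comment": 0.05, "share": 0.01}),
    Post("political-rant", {"like": 0.10, "comment": 0.20, "share": 0.05}),
    Post("brand-advert", {"like": 0.02, "comment": 0.00, "share": 0.00}),
]

feed = [post.post_id for post in rank_feed(candidates, INTERACTION_WEIGHTS)]
print(feed)
```

With these invented numbers, the post most likely to draw comments and shares ranks first even though the holiday photos are far more likely to be liked, because comments and shares carry higher point values. A chronological feed, by contrast, would simply sort the same posts by publication time.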

At bottom, this is the same thing Google has been doing for twenty-five years — a function without which the internet could never have reached where it is today: ordering an enormous quantity of information that the human brain, on its own, cannot process.

The algorithms of social networks do not have to be the devil. In reality, they serve a function that is genuinely necessary in this interconnected world we inhabit: they explore content far beyond anything you could consume on your own, and attempt to infer which part of that mountain of information actually interests you.

And although the idea has taken hold that they were trying to control what we saw, I remain convinced that was not the case — at least initially. In their early days, social media algorithms were reacting to the fact that chronological feeds had become irrelevant, tedious, and incomprehensible.

If some networks have become cesspools, it is for two reasons. The first is that their users — the versions of themselves they present on those platforms — were seeking out that kind of content: confrontational, violent, or designed to serve as an echo chamber. The most compelling evidence is that while some platforms resemble spittoons, others, like LinkedIn, are a passable imitation of a tea room run by the late Queen of England at Buckingham Palace. If the algorithm were the problem, they would all be equally awful.

The second reason is that certain platforms (to some extent Facebook and YouTube, but above all Twitter) did nothing when particular users began exploiting the algorithm for their own political or financial ends. They allowed far-right bots and professional fake news creators to flood users’ feeds and ultimately degrade the platform for everyone.

Of course, the phenomenon of users trying to exploit an application’s internal mechanisms for their own ends was nothing new. Google, on many occasions over the past twenty-five years, has made sweeping changes to its algorithm to correct exactly these kinds of drift. Readers will recall, for instance, that for a time every headline seemed to read something like “This girl went out on the street — you won’t believe what happened next,” and that at another point the internet was flooded with short recipe videos that then vanished as if they had never existed.

Companies whose business is organising information on the internet tend — out of a basic instinct for survival — to make decisions that prioritise the quality of their service over the interests of a few.

And this is what is truly new about the present moment. Many years ago, Mark Zuckerberg made an apparently inexplicable decision: to allow the platform to fill with low-quality content in order to keep charging for advertising. Over time, Elon Musk followed the same path and has ended up turning Twitter into the new Facebook. What both are signalling is that their platforms have failed at that model of creating a “distributed society,” and now have only a captive audience that will last them a few more years, one they intend to squeeze for everything it is worth.

Peak Algorithm

As a result, these distributed social networks have stopped fulfilling their promise. They are no longer neutral spaces for conversation between equals. Publishing on them has become an extreme sport that exposes you to constant judgment, misunderstandings, and pile-ons. The ease of posting has made it far too cheap to attack those who put themselves out there. And to that must be added the ever more intensive presence of advertising and brand-generated content.

The result has been a silent withdrawal by the very people who once made these networks interesting: fewer and fewer posts, fewer likes, fewer retweets, and ever more passive consumption. The prevailing feeling is that, in order to protect your own identity and mental peace, it is safer today to watch than to speak.

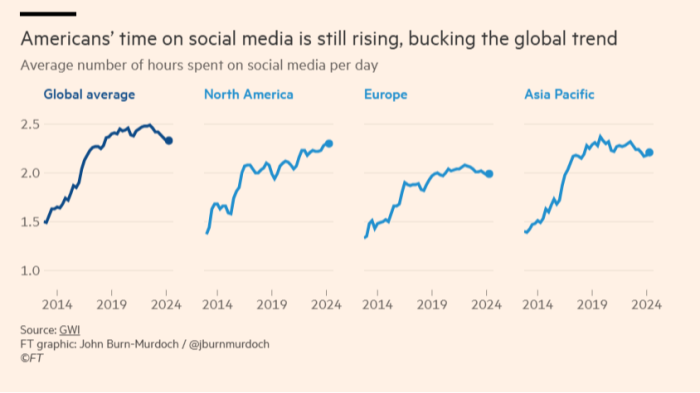

These networks, in consequence, are dying. Facebook’s and Twitter’s user bases are in freefall, and social media use in general is declining too, at least outside the United States.

Meanwhile, the function that Facebook once served — keeping us connected with the people we know — has migrated to messaging platforms like WhatsApp and Telegram. The “distributed social network” now exists across multiple personal, private group chats.

And this has happened largely because we discovered years ago that we did not want to share the same updates with our mothers as with our friends — but also because the algorithmic feed culture of distributed networks has failed: it has become unbearable, irrelevant, incapable of making sense of reality and information.

Substack: The Moment of the Tribes

What the continued need for the algorithm ultimately reveals is something fundamental to human experience: we do not want a distributed society where every voice is heard equally. We do not want to listen to everyone. We want to pay attention to the people we find interesting at any given moment. Having to engage with anyone who wanders past and fires off a tweet is a terrible waste of time, not unlike having to sift through every possible search result on Google to find the one that matters. It is something we simply cannot afford in a life where we need to stay connected to what is important.

For this reason, the next-generation platforms — TikTok, Substack, and, to some extent, Instagram — no longer share even a trace of that ambition for horizontality. No one expects us all to make videos or write lengthy articles, or to have the best photographs. We have abandoned the aspiration of living in a network of equals. We accept that there will be influencers and content creators whom the rest of us will follow, because they are the ones making the effort to create things of value. The new platforms look far more like a television channel made by many than like what Facebook once intended to be.

The meteoric rise of Substack is the consequence of that trend. This platform is today offering the content and connection to public debate that Twitter provided ten years ago. It is the new public square. Substack is the new Twitter.

Like TikTok, and unlike Twitter and Facebook, Substack professionalises content creation by demanding a greater effort from anyone who wants to be an author. A 140-character tweet no longer suffices to claim an audience’s attention. By the same logic, mounting a bot campaign out of nowhere to attack an argument on Substack is no longer possible either.

Eighteen years after the birth of Facebook, we are closing the age of open, distributed networks. The experiment by which we gave a voice, simultaneously and without filter, to every person equally has not worked, and it is dying. And my instinct tells me that we are heading toward something that more closely resembles the organic texture of pre-modern human societies: decentralised networks organised around community leaders — a society of tribes.

In the years ahead, I believe we will see a decentralised network emerge — or, put another way, a network with many small centres, likely disconnected from one another. Every influencer of a certain stature will have a community, a group that trusts them and follows them everywhere.

I would wager that the next great platform will no longer be public, but will require an invitation to participate, or some form of credential. I would also wager that there will be far more of them than there are now.

I find myself wondering whether, after the tribes, something resembling nation-states might also emerge on the internet. But before that, we will have to confront another challenge: that of holding together a society that will no longer have shared public spaces, but a decentralised network in which each of us belongs to our own tribe.

If you’re interested in technology, you’ll like Hijos del optimismo (Children of Optimism). It’s my first book, the older sibling of this newsletter, and a project I’ve been working on for many years.

You can already order Hijos del optimismo on Amazon, La Casa del Libro, El Corte Inglés and the publisher’s website, Debate.

You can also read more about me and the story that inspired me to write it.